How to Use the “Extension” Feature to Build Long Narratives

The landscape of generative video has shifted dramatically. In the early days, we were confined to the “five-second wall,” a frustrating limitation where AI video generators would cut off just as the story began to get interesting. As we move through 2026, however, more sophisticated generative models and the steady refinement of the “Extension” feature have fundamentally changed the game for filmmakers, creators, and digital storytellers.

No longer are we limited to fleeting, disconnected clips. With tools like Google’s Veo 3.1, Seedance 2.0, and Kling.ai, creators can now stitch together seamless, multi-minute narratives. This guide walks you through the technical essentials, strategic planning, and best practices for building long-form, cinematic AI stories with extension technology.

Understanding the “Extension” Paradigm

At its core, the Extension feature is an AI-driven video synthesis process. It analyzes the final frames of an existing clip to predict and generate the movement, environment, and character actions that follow. Starting a new prompt from scratch often leads to inconsistent character designs or jarring lighting shifts. The extension tool avoids this by acting as a bridge from one segment to the next.

In 2026, the underlying machine learning algorithms have become significantly more “context-aware.” When you trigger an extension, the AI isn’t just guessing the next frame; it is performing a temporal analysis of the physics, lighting, and spatial orientation established in your base clip. This allows for the creation of long, flowing sequences that feel like traditional cinematography rather than a series of AI hallucinations.

Step-by-Step: Building Narratives with Veo 3.1 and Google Flow

The Veo 3.1 Scene Extension feature has become a benchmark for professional-grade cinematic production and storytelling. Accessed via Google Flow, this workflow lets you link multiple distinct camera movements into one cohesive sequence.

1. Preparing Your Base Layer

Always start with a high-quality, 5–10 second “foundation” clip. Ensure your prompt is descriptive and includes specific camera instructions (e.g., “slow tracking shot,” “pan left,” “dolly zoom”). This base acts as the DNA for your entire narrative.

2. Executing the Extension

Once your base clip is rendered, locate the “Extend” button within the Google Flow interface. You will be prompted to either allow the AI to “Auto-Continue” or provide a Custom Prompt for the next segment. For long-form storytelling, I highly recommend the custom prompt approach to maintain narrative control.

3. Managing Transitions

The secret to seamless storytelling is the overlap strategy. When extending a scene, the model looks at the last 15–30 frames. If you want a character to walk from a forest into a mountain pass, your extension prompt should explicitly state: “The character continues walking, transitioning from the dense forest into an open mountain pass, maintaining the same lighting and character attire.”

Advanced Strategies for Long-Form Continuity

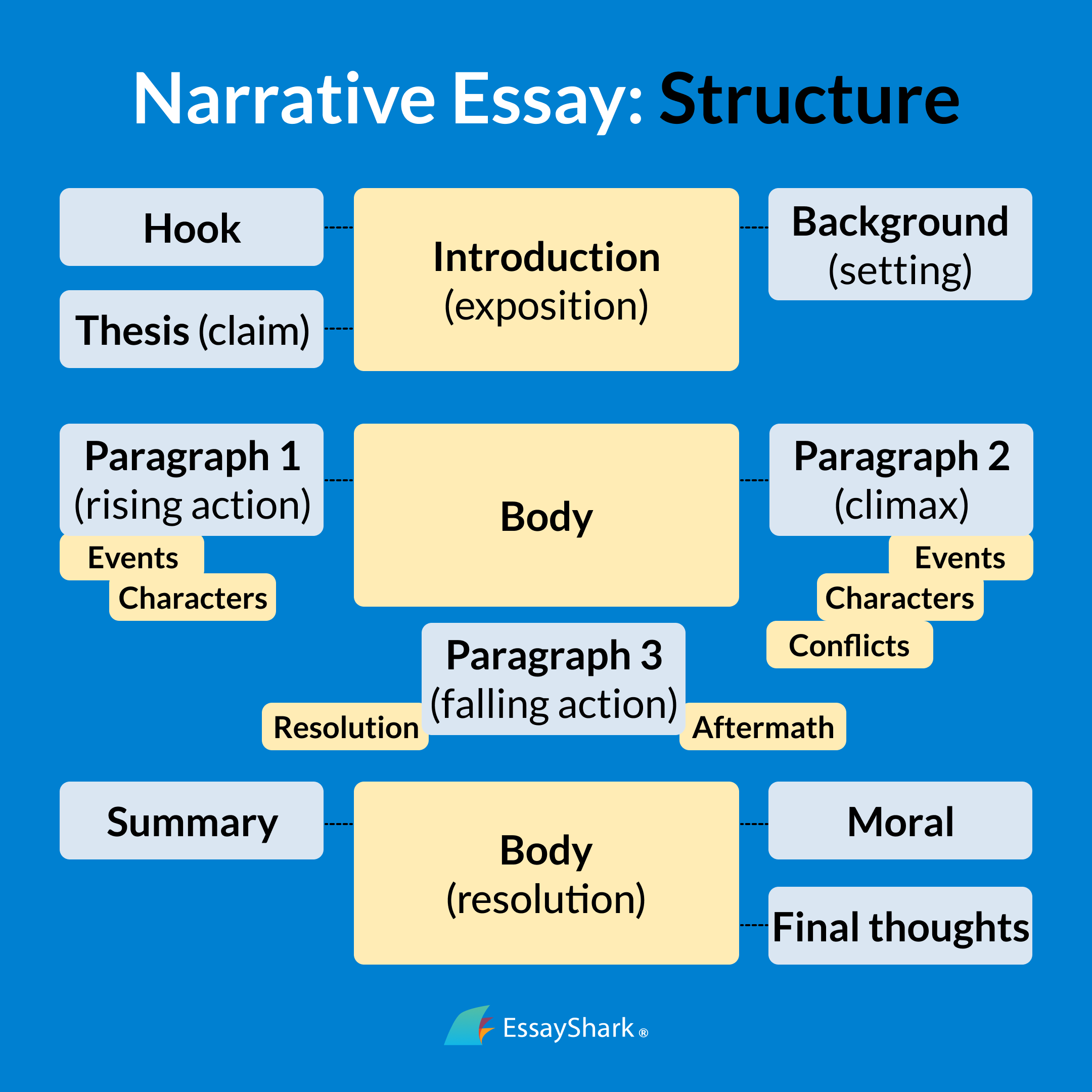

Building a 3-minute video is not just about pressing “Extend” repeatedly. It requires a robust narrative structure and strategic planning to prevent the AI from “drifting”—a phenomenon where the character’s face or the environment slowly changes over time.

The “Anchor Frame” Technique

If you are working on a particularly long project, don’t just rely on the AI’s memory. Save specific “anchor frames” every 15 seconds. If the AI begins to deviate from your style, you can re-upload your anchor frame and use it as a Reference Image in your next extension prompt. This effectively “resets” the AI’s memory to your original vision.
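To make the 15-second cadence concrete, here is a minimal Python sketch. It computes where anchor frames fall in an assembled sequence and which anchor to return to when drift appears. The 24 fps default and the helper names (`anchor_schedule`, `latest_anchor_before`) are illustrative assumptions. No platform API is involved: you would still export the frames from your editor and upload them as Reference Images yourself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Anchor:
    timestamp_s: float  # position in the assembled sequence, in seconds
    frame_index: int    # zero-based frame number at the chosen frame rate


def anchor_schedule(total_seconds: float, fps: int = 24, interval_s: float = 15.0) -> list[Anchor]:
    """One anchor every `interval_s` seconds, starting with the very first frame."""
    if fps <= 0 or interval_s <= 0:
        raise ValueError("fps and interval_s must be positive")
    anchors = []
    step = 0
    # Multiply instead of accumulating so floating-point error does not build up.
    while step * interval_s < total_seconds:
        t = step * interval_s
        anchors.append(Anchor(timestamp_s=t, frame_index=round(t * fps)))
        step += 1
    return anchors


def latest_anchor_before(anchors: list[Anchor], drift_time_s: float) -> Anchor:
    """The most recent anchor at or before the moment drift was noticed."""
    earlier = [a for a in anchors if a.timestamp_s <= drift_time_s]
    if not earlier:
        raise ValueError("no anchor precedes the drift point")
    return earlier[-1]
```

A 3-minute (180-second) sequence at 24 fps yields 12 anchors, at frames 0, 360, 720, and so on. If drift shows up at the 100-second mark, `latest_anchor_before` returns the 90-second anchor (frame 2160).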

Balancing “Auto-Extend” vs. “Customized Extend”

Auto-Extend: Best for simple environmental shots, such as a camera panning across a landscape or water flowing down a river. It is fast, cost-effective, and requires zero creative input.

Customized Extend: Essential for character-driven narratives. By inputting specific prompts, you control the narrative arc. If a character is meant to pick up a book, the extension prompt should detail the exact hand movement to ensure the AI doesn’t just generate a generic motion.

Technical Essentials and Cost Optimization in 2026

As we navigate the 2026 AI ecosystem, compute resources are becoming more accessible but still carry costs. To produce long-form work efficiently without breaking the bank, follow these technical best practices:

Segmented Generation: Break your story into “scenes” rather than trying to generate a 3-minute video in one go. Export your 15-second extensions, then use a dedicated NLE (Non-Linear Editor) like Adobe Premiere or DaVinci Resolve to stitch them together.

Resolution Management: Many extension tools default to lower resolutions to save compute. Always check your settings to ensure the “Upscale” feature is toggled on during the final extension phase.

Prompt Chaining: Keep a master document of your prompt structure. If your story involves a specific protagonist, ensure that their physical description is included in every single extension prompt to maintain character consistency.
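One way to keep a master prompt document consistent is to store the repeated text once and generate every extension prompt from it. The Python sketch below assumes nothing about any platform's API. `PromptBible` and `extension_prompt` are hypothetical names, and the output is plain text you would paste into Flow, Kling, or Seedance yourself. If your tool has a separate negative-prompt field, put the `negatives` list there rather than appending it as an “Avoid:” clause.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBible:
    """Hypothetical master document: the text repeated in every extension prompt."""
    protagonist: str  # fixed physical description of the main character
    style: str        # fixed visual-style terms
    negatives: list[str] = field(default_factory=list)  # things the model should avoid

    def extension_prompt(self, action: str) -> str:
        """Chain the fixed character and style text onto a new action beat."""
        parts = [
            "Continuing from the previous shot,",
            action.strip().rstrip(".") + ".",
            f"Character: {self.protagonist}.",
            f"Style: {self.style}.",
        ]
        if self.negatives:
            parts.append("Avoid: " + ", ".join(self.negatives) + ".")
        return " ".join(parts)


bible = PromptBible(
    protagonist="a knight in ornate silver armor with a dragon crest on his shield",
    style="cinematic, mist-shrouded forest, dappled sunlight",
    negatives=["sudden costume changes", "new characters"],
)
print(bible.extension_prompt("the knight cautiously steps deeper into the forest"))
```

Only the action changes from one extension to the next. The protagonist's description and the style terms are restated verbatim every time, which is the point of prompt chaining.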

Overcoming the “AI Hallucination” Barrier

Even with the best tools, AI can occasionally produce “glitchy” results during an extension. If the AI begins to distort a character’s face or morph the environment, do not panic.

- Backtrack: Delete the last 5 seconds of the extension.

- Adjust the Prompt: Often, the issue is that the AI was given too much creative freedom. Add “high fidelity,” “stable character,” or “cinematic focus” to your extension prompt to constrain the model.

- Use Seedance 2.0’s “Fixed Frame” Mode: If available, use the “Fixed Frame” mode, which lets you lock specific elements of the scene while the AI generates only the moving parts. It is one of the most effective tools for preventing unwanted morphing.

The Future of AI Storytelling

As we look toward the latter half of 2026, we are seeing the rise of automated narrative pipelines. Kling.ai and other AI video platforms are already experimenting with “Auto-Extend” features that can generate up to 3 minutes of continuous footage from a single, well-structured script.

The role of the creator is shifting from “editor” to “director.” You aren’t just typing prompts; you are managing a digital production crew. By mastering the Extension feature, you are positioning yourself at the forefront of a new medium—one where the only limit to the length of your story is the depth of your imagination.

Conclusion

Building long-form narratives with AI in 2026 is no longer a technical impossibility; it is a refined skill. By understanding how to properly use the Extension feature, utilizing anchor frames, and strategically balancing custom prompts with auto-generation, you can create immersive, multi-minute experiences that captivate audiences.

Whether you are an independent filmmaker looking to prototype scenes, a marketer creating long-form brand stories, or a creative writer exploring the visual medium, the tools are now in your hands. Start with a strong base, maintain your character consistency through anchor frames, and don’t be afraid to experiment with the nuances of each platform. The era of the short-form AI clip is over—the era of the AI-generated epic has begun.

Advanced Strategies for Narrative Cohesion

While anchor frames establish a foundation, achieving true narrative cohesion across dozens or even hundreds of extended clips demands more sophisticated techniques. Think of it as conducting an orchestra: each instrument (clip) must play in harmony with the rest, guided by a singular vision.

Prompt Engineering for Granular Control: Beyond simple descriptions, advanced prompt engineering techniques involve explicitly referencing prior states and desired continuities. For instance, instead of just “The knight enters the forest,” a more effective extension prompt might be: “Continuing from the previous shot, the knight, still wearing his ornate silver armor and bearing the distinctive dragon crest on his shield, cautiously steps into the ancient, mist-shrouded forest. The dappled sunlight filters through the canopy, illuminating the path ahead.” Notice the specific details reinforcing character appearance, equipment, and environmental consistency. Platforms are increasingly adept at processing these complex, multi-clause prompts, allowing creators to dictate not just what happens next, but how it relates to what came before. Experiment with negative prompts as well (“no sudden costume changes,” “avoid introducing new characters”) to prevent unwanted deviations.

Leveraging Iterative Refinement and Seed Manipulation: Professional AI video artists often don’t accept the first extension. Instead, they engage in an iterative process. They might generate several variations of an extension, selecting the one that best maintains continuity and narrative intent. Furthermore, subtle manipulation of the seed image or video frame before extension can dramatically influence the outcome. This could involve minor color grading adjustments to maintain a consistent mood, cropping to reframe the shot slightly, or even using a light touch of inpainting to fix a minor visual anomaly that might otherwise propagate into future extensions. This level of manual intervention, though seemingly counter-intuitive for AI tools, empowers the creator to course-correct the AI’s tendencies.
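The selection step can be partly automated. The Python sketch below is a deliberately crude heuristic swapped in for illustration, not anything a platform provides. It scores each candidate take by the mean absolute pixel difference between its first frame and the previous clip's last frame, then keeps the closest match. Frames are assumed to be small grayscale grids you have already exported and downscaled, and the function names are hypothetical. Because the score ignores motion and identity, treat it as a first-pass filter before reviewing takes by eye.

```python
# Hypothetical continuity check: frames are small grayscale grids (0-255),
# for example downscaled stills you exported yourself.
Frame = list[list[int]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("frames must have the same dimensions")
    count = sum(len(row) for row in a)
    if count == 0:
        raise ValueError("frames are empty")
    total = sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    return total / count


def pick_best_take(previous_last_frame: Frame, takes: dict[str, Frame]) -> str:
    """Name of the take whose first frame best matches where the prior clip ended."""
    if not takes:
        raise ValueError("no takes to compare")
    return min(takes, key=lambda name: mean_abs_diff(previous_last_frame, takes[name]))
```

Suppose the previous clip ends on a uniform frame of value 10. A take whose first frame jumps to 200 scores 190. A take that differs by about one level per pixel scores 1, so `pick_best_take` returns the latter.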

The Power of the “Character Bible” and Environment Guides: For truly long narratives, a “character bible” becomes invaluable. This isn’t just a textual description; it can include specific reference images of your protagonist from various angles, in different emotional states, and with consistent attire. Some advanced AI platforms allow you to upload these character sheets as supplementary reference material that the AI can draw upon during generation, significantly boosting consistency. Similarly, creating “environment guides” – a series of reference images or detailed textual descriptions of key locations – ensures that your settings remain visually coherent throughout the epic journey, preventing the uncanny shifts in architecture or foliage that can break immersion. This systematic approach transforms AI from a random generator into a highly customizable storytelling engine.

Weaving Cinematic Language into AI Narratives

The “Extension” feature isn’t just about making longer clips; it’s about building cinematic sequences. This means integrating traditional filmmaking principles into your AI workflow.

Mastering Pacing and Shot Dynamics: A compelling narrative isn’t a continuous wide shot. It requires variation in pacing and shot composition. Use rapid, shorter extensions to create dynamic action sequences or quick cuts, mirroring traditional editing techniques. Conversely, longer, more gradual extensions can build tension, establish atmosphere, or allow for character introspection. Intentionally vary your shot types – moving from a wide establishing shot to a medium shot focusing on character interaction, then to a close-up revealing emotion. Prompt the AI not just for the action, but for the camera angle and movement: “Extend with a slow push-in on the protagonist’s worried face,” or “Transition to a sweeping crane shot revealing the vast battlefield.” This conscious direction elevates your AI narrative from a series of clips to a thoughtfully constructed film.

The Unseen Partner: Planning for Sound and Music: While AI video tools primarily generate visuals, a long narrative is incomplete without a robust sound design. As you plan your visual extensions, consider the auditory landscape. Are you generating a quiet, contemplative scene? Plan for ambient sound and subtle music. Is it an intense action sequence? Anticipate the need for impactful sound effects and a driving score. While AI tools are beginning to offer rudimentary sound generation, the true power of sound still lies in post-production. By consciously designing your visual extensions with sound in mind – leaving visual “space” for dialogue, ensuring actions have clear visual cues for sound effects – you create a more complete and immersive experience. This foresight bridges the gap between AI generation and professional post-production workflows.

Navigating the Nuances: Overcoming Common Extension Challenges

Despite the advancements, building long narratives with AI extension tools presents unique challenges that require strategic solutions.

Combating “Narrative Drift” and Stylistic Inconsistency: One of the most common issues in long-form AI generation is “narrative drift,” where characters or environments subtly change over time, diverging from their initial appearance. This can be exacerbated by “stylistic inconsistency,” where lighting, color palette, or even the overall aesthetic shifts between extended segments. The best defense against drift is frequent re-anchoring. Regularly re-evaluate your base frame and, if necessary, regenerate a segment from an earlier, more consistent point, or use a stronger, more detailed anchor frame. For stylistic consistency, dedicate specific prompt elements to visual style (“cinematic, noir lighting,” “vibrant, painterly aesthetic”) and apply them rigorously across all extensions. Consider creating a “style guide” with reference images to help the AI maintain a consistent visual language. Furthermore, some platforms offer “style transfer” functions that can help unify disparate segments.
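Stylistic drift is easier to catch early if you measure it. The sketch below uses average color as a rough, assumed proxy for the color-palette side of the problem; it says nothing about faces or costumes. It compares the average color of pixels sampled from each extended segment against the anchor frame and reports the first segment that strays past a threshold. The function names and the default threshold of 20 (Euclidean distance on a 0-255 RGB scale) are illustrative assumptions to tune by eye. Sampling the pixels from your exports is left to whatever imaging tool you already use.

```python
import math
from typing import Optional, Sequence

# An RGB pixel on a 0-255 scale, sampled from an exported frame.
RGB = tuple[float, float, float]


def mean_color(pixels: Sequence[RGB]) -> RGB:
    """Average color of a set of sampled pixels."""
    if not pixels:
        raise ValueError("need at least one pixel")
    n = len(pixels)
    r = sum(p[0] for p in pixels) / n
    g = sum(p[1] for p in pixels) / n
    b = sum(p[2] for p in pixels) / n
    return (r, g, b)


def first_drifting_segment(
    reference: RGB, segments: Sequence[Sequence[RGB]], threshold: float = 20.0
) -> Optional[int]:
    """Index of the first segment whose average color strays too far from the anchor.

    Returns None if every segment stays within `threshold` (Euclidean RGB distance).
    """
    for index, pixels in enumerate(segments):
        if math.dist(reference, mean_color(pixels)) > threshold:
            return index
    return None
```

Suppose the check flags segment 2. Under this scheme, you would regenerate from the end of segment 1, re-anchored to your reference frame, rather than keep extending from the drifted footage.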

Workflow Optimization for Epic Scale: Generating an epic narrative isn’t just about creativity; it’s about project management. Once you count every extension and its alternate takes, a single minute of AI-generated video can involve dozens of clips, so an efficient workflow is crucial. Implement a clear naming convention for your clips (e.g., `Scene1Shot3Take2ExtvA`). Use project folders to organize different scenes, character assets, and prompt variations. Leverage the cloud both for storing large video files and for processing power. Consider batch processing capabilities if available, and understand the rendering times for your chosen platform. As the AI video market continues its rapid expansion, projected to reach over $500 million by 2028 according to recent industry analyses, the demand for scalable, efficient workflows will only grow. Treat your AI narrative project like a mini-studio production, complete with careful planning and robust asset management.
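A naming convention only helps if every file follows it. The small Python sketch below builds and parses names in the `Scene1Shot3Take2ExtvA` pattern. Two parts of it are assumptions: the reading of `ExtvA` as “extension, version A” (a single capital letter), and the `Scene1/Shot3` folder layout.

```python
import re
from dataclasses import dataclass

# Assumed pattern: Scene<n>Shot<n>Take<n>Extv<capital letter>
CLIP_NAME = re.compile(r"^Scene(\d+)Shot(\d+)Take(\d+)Extv([A-Z])$")


@dataclass(frozen=True, order=True)
class ClipId:
    scene: int
    shot: int
    take: int
    version: str  # single capital letter, e.g. "A"

    def name(self) -> str:
        """Render the clip name in the Scene/Shot/Take/Extv convention."""
        return f"Scene{self.scene}Shot{self.shot}Take{self.take}Extv{self.version}"

    def folder(self) -> str:
        """Hypothetical layout: one directory per scene, one subdirectory per shot."""
        return f"Scene{self.scene}/Shot{self.shot}"


def parse_clip_name(name: str) -> ClipId:
    """Turn a file stem such as 'Scene1Shot3Take2ExtvA' back into its parts."""
    match = CLIP_NAME.match(name)
    if match is None:
        raise ValueError(f"not a valid clip name: {name!r}")
    scene, shot, take, version = match.groups()
    return ClipId(int(scene), int(shot), int(take), version)
```

Parse names before sorting them. A plain alphabetical sort places `Scene10...` ahead of `Scene2...`. The `ClipId` dataclass is declared with `order=True`, so sorting the parsed values keeps scenes, shots, takes, and versions in numeric order.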

The Future is Now: Ethical Dimensions and the Evolving Role of the Creator

The advent of AI video extension profoundly impacts not just how stories are told, but also the very concept of authorship and the role of the human artist.

Authorship, Ownership, and the Creative Frontier: As AI tools become more sophisticated, the lines between human creation and machine assistance blur. Who owns the copyright to an AI-generated epic: the prompt engineer, the platform developer, or both jointly? These questions are at the forefront of legal and ethical discussions. While current legal frameworks often lean towards human authorship for creative expression, the evolving capabilities of AI necessitate new considerations. Creators must be aware of the terms of service for the platforms they use, as these often dictate ownership and usage rights. This new frontier requires ongoing dialogue and adaptation from creators, platforms, and legal bodies alike.

The Indispensable Human Element: Despite the power of AI, the human creator remains the indispensable visionary. AI is a tool, albeit an incredibly powerful one, but it lacks intent, emotion, and the nuanced understanding of narrative structure that defines compelling storytelling. It cannot conceive the initial spark, nor can it truly understand the emotional resonance of a character’s journey. The human director, writer, and artist are still the architects of the story, shaping prompts, making critical choices, and guiding the AI’s output towards a coherent, emotionally impactful narrative. The AI handles the heavy lifting of visual generation; the human provides the soul. This synergy is where the true magic happens, allowing individuals and small teams to achieve cinematic visions previously only accessible to large studios. The era of the AI-generated epic isn’t about replacing human creativity; it’s about amplifying it, democratizing filmmaking, and unlocking unprecedented possibilities for visual storytelling.

The journey into long-form AI narratives is just beginning, and the “Extension” feature is a cornerstone of this revolution. It demands not just technical proficiency but also a deep understanding of storytelling, visual language, and iterative refinement. By embracing these principles, creators can move beyond short-form experiments and begin crafting the next generation of immersive, expansive, and deeply personal stories, pushing the boundaries of what’s possible in the realm of visual media. The tools are here, the canvas is infinite, and the only limit is the storyteller’s imagination.