A Guide To The Safety And Ethics Of Using Veo 3.1 For Business

As we navigate the transformative landscape of generative AI in 2026, Google’s Veo 3.1 has emerged as the gold standard for high-fidelity video production. For agencies, marketing departments, and independent creators, the ability to generate cinematic-quality footage from text prompts is a game-changer. However, with great power comes the requirement for responsible AI deployment, guided by robust ethical AI principles.

This guide explores the safety frameworks, ethical considerations, commercial licensing requirements, and regulatory compliance issues that businesses must understand to use Veo 3.1 effectively and compliantly.

Understanding the Veo 3.1 Safety Architecture

The backbone of Veo 3.1’s professional utility is its safety architecture. Unlike early-stage generative models that operated in a “wild west” environment, Veo 3.1 is built on the Responsible AI for Veo on Vertex AI framework. This system is designed to prevent the generation of harmful, illegal, or sexually explicit content through multi-layered filters.

The Role of Safety Filter Codes

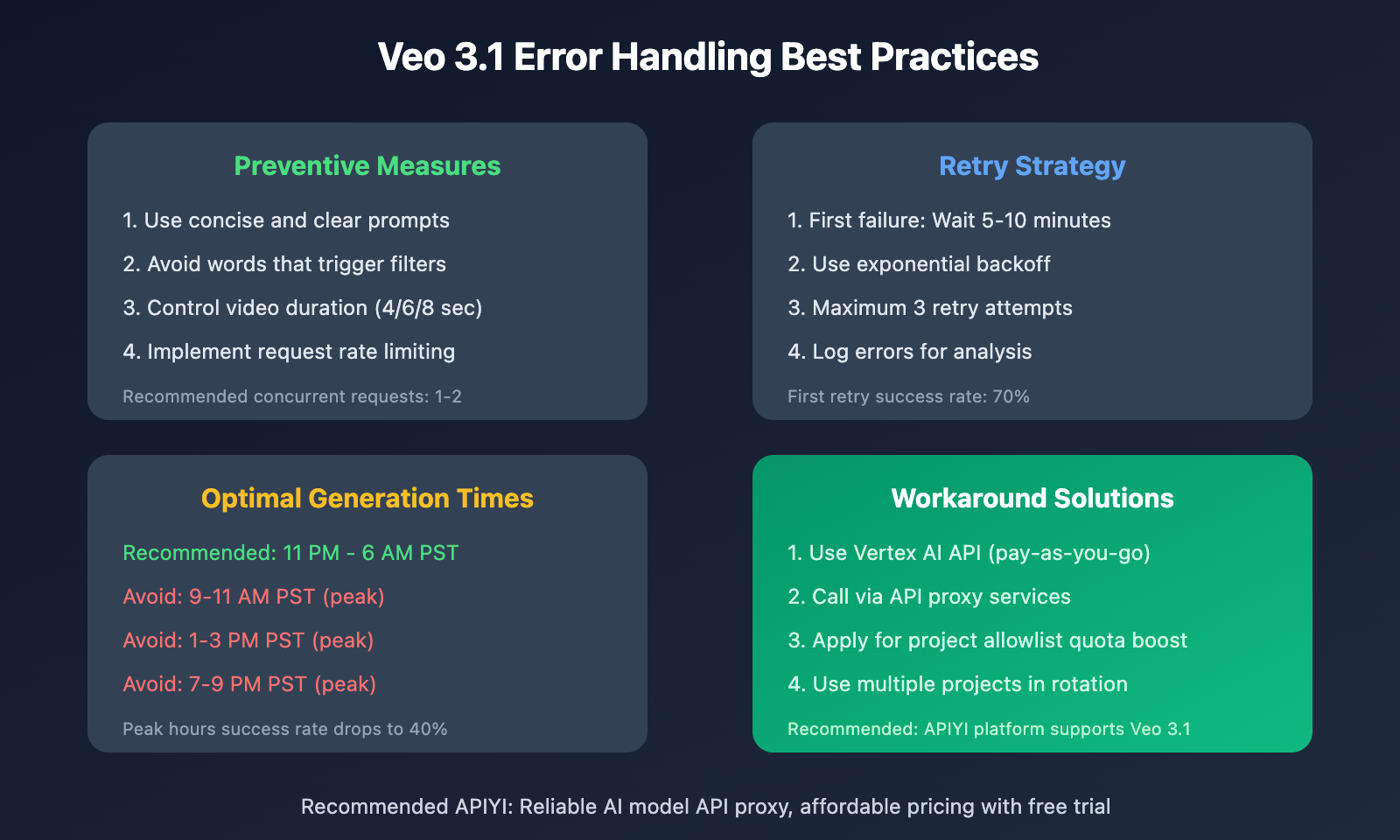

When you interact with the model, the system analyzes both your input prompts and the resulting video output in real time. If a generation is blocked, you may encounter specific safety filter codes. These codes are not merely “errors”; they indicate that a filter flagged the prompt or the output as a likely policy violation.

Businesses should view these filters as a protective shield. By preventing the creation of prohibited content, they reduce the risk that a corporate brand is inadvertently associated with controversial or unsafe visual assets.
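
One way to act on a blocked generation is to record it and route it to a reviewer instead of silently retrying. The sketch below is illustrative only: `GenerationResult`, its `filter_code` field, and the `triage` function are hypothetical names, not part of the Veo or Vertex AI API, and the code string used in the usage example is a placeholder rather than a real safety filter code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GenerationResult:
    """Hypothetical stand-in for a video generation response."""
    prompt: str
    video_uri: Optional[str] = None
    filter_code: Optional[str] = None  # placeholder value, not a real Veo code


def triage(result: GenerationResult, audit_log: list) -> str:
    """Log blocked generations for human follow-up; pass the rest to review."""
    if result.filter_code is not None:
        audit_log.append({
            "prompt": result.prompt,
            "filter_code": result.filter_code,
            "action": "escalate_to_reviewer",
        })
        return "blocked"
    return "ready_for_review"
```

A blocked result lands in the audit log with its code, which gives the team a record of which prompts triggered the filters and why they were revised.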

Commercial Licensing and the Ethics of Attribution

A primary concern for any business adopting AI-generated video is the commercial rights landscape. As of 2026, Google has clarified that content generated through commercial Vertex AI accounts is eligible for commercial use, provided it adheres to the updated Terms of Service.

SynthID and Digital Provenance

One of the most critical ethical components of Veo 3.1 is the integration of SynthID, a watermarking technology for content provenance. This invisible digital watermark is embedded directly into the video during the generation process. For businesses, this serves two purposes:

- Transparency: It allows platforms and users to identify that the content was AI-generated, fostering trust with your audience.

- Provenance: It serves as a form of digital provenance, helping to verify the origin of your assets in a crowded digital marketplace. A watermark does not by itself establish copyright ownership.

Ethical business practice in 2026 demands that companies disclose the use of AI in their marketing campaigns. Using SynthID-watermarked content is a proactive step toward maintaining consumer trust and adhering to emerging global regulations and guidelines on AI labeling.
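
Because the watermark is invisible, a visible disclosure still has to be written by the publisher. As a minimal sketch, a team could generate a viewer-facing label and a machine-readable record together. The function name and every field name here are assumptions made for illustration, not a published labeling standard or a SynthID interface.

```python
import json


def build_disclosure(title: str, model_name: str, reviewed_by: str):
    """Return a viewer-facing label and a JSON sidecar record (hypothetical schema)."""
    record = {
        "title": title,
        "ai_generated": True,
        "generator": model_name,
        "human_reviewed_by": reviewed_by,
    }
    label = f"This video contains AI-generated content ({model_name})."
    return label, json.dumps(record, sort_keys=True)
```

The label can go into the video description, while the JSON record can be stored alongside the asset for later audits.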

Technical Improvements: Veo 3.1 vs. Legacy Models

The transition from Veo 3 to Veo 3.1 has been marked by significant improvements in narrative control and audio quality. For businesses, this means less time spent on “prompt engineering” and more time focusing on creative output.

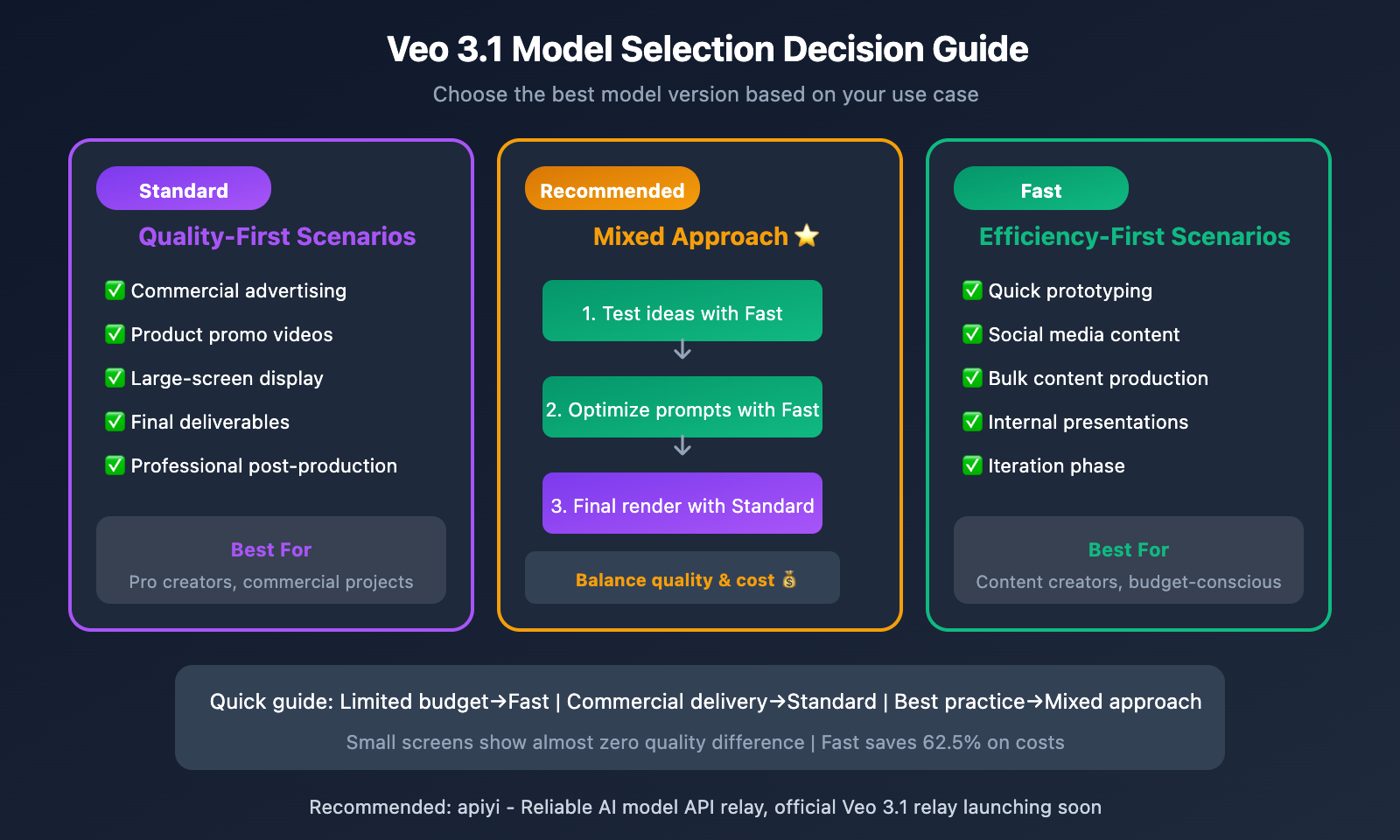

When choosing between the standard Veo 3.1 model and the “Fast” variant, businesses must balance speed with complexity. While the “Fast” model is excellent for quick storyboarding and social media content, the standard Veo 3.1 model provides superior adherence to complex scripts and brand-specific visual requirements.

Mitigating Societal Risks: Insights from the Veo 3 Technical Report

Google’s Veo 3 Technical Report highlights an exhaustive process of internal red teaming aimed at identifying potential generative AI risks. Before the model was released to the public, hundreds of hours were spent testing it against potential societal risks, including bias, misinformation, and deepfake generation.

Bias and Representation

AI models are trained on vast datasets that can contain inherent societal biases. Google has implemented specific mitigations intended to help Veo 3.1 provide equitable representation across different ethnicities, genders, and cultural contexts. For a business, this is a critical safety feature: using a model that produces biased content is not only an ethical failing but a potential PR disaster.

The “Human-in-the-Loop” Requirement

Despite the high level of automation, the most successful businesses in 2026 are those that maintain a human-in-the-loop workflow, often overseen by dedicated AI ethics committees. Ethical AI usage means that human editors still review, curate, and finalize all AI-generated assets. This ensures that the content aligns with brand voice, legal standards, and community expectations.

Navigating Costs and Resource Allocation

Understanding the cost structure is just as important as understanding the ethics. Google’s pricing model for 2026 reflects the high compute cost of generating high-resolution, high-fidelity video.

Businesses should audit their video production workflows to determine where Veo 3.1 adds the most value. Often, the ROI is found in:

- Rapid Prototyping: Creating multiple versions of an ad to test audience engagement.

- Localization: Generating background visuals for videos in multiple languages without needing to re-shoot.

- Asset Scaling: Creating thousands of unique, personalized video assets for hyper-targeted marketing campaigns.

Best Practices for Businesses in 2026

To stay ahead of the curve, your business should implement the following internal policies regarding Veo 3.1:

- Establish an AI Usage Policy: Clearly define when and where AI-generated video can be used in your marketing materials.

- Prioritize Transparency: If you are using AI to create significant portions of your content, disclose this to your audience in your video descriptions or metadata.

- Continuous Monitoring: Regularly review the Veo 3.1 FAQ and Google’s policy updates. The landscape of AI regulation is shifting rapidly, and what is compliant today may require adjustment tomorrow.

- Data Privacy and Security: Ensure that your prompts do not include sensitive, proprietary, or private customer data. While Google provides strong privacy protections, it is best practice to sanitize all inputs before they leave your organization.
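
The input sanitization mentioned in the last item can be partly automated. The following is a minimal sketch, assuming that simple pattern matching is acceptable as a first pass: the regular expressions are deliberately rough, the `blocked_terms` list is a hypothetical internal list of confidential names, and nothing here replaces a proper data loss prevention review.

```python
import re

# Rough patterns for illustration; real PII detection needs broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def sanitize_prompt(prompt: str, blocked_terms=()) -> str:
    """Redact emails, phone-like numbers, and listed confidential terms."""
    cleaned = EMAIL.sub("[REDACTED_EMAIL]", prompt)
    cleaned = PHONE.sub("[REDACTED_PHONE]", cleaned)
    for term in blocked_terms:
        # Case-insensitive literal match on each confidential term.
        cleaned = re.sub(re.escape(term), "[REDACTED_TERM]", cleaned,
                         flags=re.IGNORECASE)
    return cleaned
```

Running every prompt through a function like this before submission gives reviewers a single place to tighten the rules as new kinds of sensitive data are identified.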

The Future of Ethical Video Generation

As we look toward the latter half of 2026, the integration of models like Veo 3.1 into the enterprise stack is no longer an experiment; it is a necessity. The businesses that will thrive are those that view safety and ethics not as a hurdle, but as a competitive advantage.

By leveraging the built-in safety features, respecting digital provenance through SynthID, and maintaining a commitment to human-led creative oversight, companies can harness the power of Veo 3.1 to tell stories that are not only visually stunning but also ethically sound.

Key Takeaways for 2026:

- Safety First: Treat the built-in safety filters and their codes as a safeguard that keeps prohibited content out of your brand’s output.

- Commercial Legitimacy: Confirm that your Vertex AI account and the current Terms of Service cover commercial use of your generated assets.

- Human Oversight: Never bypass the human review process. AI is a tool for the creator, not a replacement for human judgment.

- Transparency: Embrace digital watermarking as a way to build trust with your audience.

The evolution of Veo 3.1 represents a massive leap forward in creative technology. By grounding your implementation in the guidelines provided in this guide, you can ensure that your business remains at the forefront of the AI-powered video revolution, safely and ethically.

Practical Implementation Strategies for Ethical Veo 3.1 Use

Moving beyond principles, successful ethical integration of Veo 3.1 demands concrete, actionable strategies within your organization. This requires a multi-faceted approach encompassing training, governance, and robust review processes.

Comprehensive Employee Training and Upskilling: It’s insufficient to simply provide access to Veo 3.1. Businesses must invest in thorough training programs that cover not only the technical operation of the tool but, more critically, the ethical implications of its use. Workshops should educate teams on identifying potential biases, understanding copyright nuances in AI-generated content, recognizing the signs of misleading outputs, and applying the company’s specific ethical guidelines. Regular refreshers and updates are essential as the technology and ethical landscape evolve. For instance, a marketing team might learn to scrutinize generated visuals for cultural insensitivity, while a product development team focuses on ensuring generated UI elements adhere to accessibility standards.

Establishing Internal Governance Frameworks: Formalizing an internal structure for AI ethics is paramount. This could involve creating a dedicated AI ethics committee or assigning responsibility to a cross-functional team comprising legal, marketing, product, and technical experts. This committee would be tasked with developing internal policies, reviewing high-stakes projects involving Veo 3.1, and establishing clear escalation paths for ethical concerns. This ensures that ethical considerations are embedded at every stage of the content creation lifecycle, from initial prompt engineering to final publication.

Robust Content Vetting and Review Workflows: No AI-generated content, regardless of its source, should go live without human scrutiny. Implement multi-stage review processes where human editors, fact-checkers, and brand compliance specialists examine Veo 3.1 outputs for accuracy, brand alignment, legal compliance, and ethical soundness. This workflow acts as a critical failsafe, catching potential errors, biases, or misrepresentations that the AI might produce. Consider a “red team” approach where a dedicated group attempts to find flaws or ethical breaches in generated content before it reaches the public.
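
A multi-stage review like this can be enforced with a simple publication gate. The sketch below assumes three required stages, matching the editor, fact-checker, and brand compliance roles named above. The stage names, the `Asset` class, and its methods are hypothetical and would need to be adapted to your own workflow tooling.

```python
from dataclasses import dataclass, field

# Assumed stage names, one per reviewer role described in the text.
REQUIRED_STAGES = ("editorial", "fact_check", "brand_compliance")


@dataclass
class Asset:
    name: str
    approvals: dict = field(default_factory=dict)  # stage -> reviewer name

    def approve(self, stage: str, reviewer: str) -> None:
        """Record a human sign-off for one review stage."""
        if stage not in REQUIRED_STAGES:
            raise ValueError(f"unknown review stage: {stage}")
        self.approvals[stage] = reviewer

    def missing_stages(self) -> list:
        """List the stages that still lack a sign-off, in required order."""
        return [s for s in REQUIRED_STAGES if s not in self.approvals]

    def can_publish(self) -> bool:
        """An asset is publishable only when every stage has signed off."""
        return not self.missing_stages()
```

Keeping the reviewer name with each approval also produces an audit trail showing who signed off on each published asset.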

Mitigating Specific Risks Associated with Generative Video

While Veo 3.1 offers immense creative potential, businesses must proactively address inherent risks associated with generative AI, particularly in video.

Addressing Misinformation and Deepfake Potential: The realistic nature of Veo 3.1 outputs, while powerful for legitimate creative purposes, also carries the risk of misuse in generating misleading or deceptive content. Businesses must enforce strict internal policies against creating content that could be misinterpreted as real events, individuals, or news without clear, explicit disclosure. This includes avoiding the generation of “deepfakes” of real people or events unless explicitly authorized and clearly labeled for satirical, artistic, or educational purposes with appropriate consent. Educating employees on responsible disclosure practices for AI-generated content is crucial to maintain audience trust.

Navigating Intellectual Property and Copyright: The legal landscape surrounding AI-generated content and the intellectual property of its training data is still evolving. Businesses must exercise caution regarding the input data used to generate Veo 3.1 content, ensuring it is either original, licensed, or falls under fair use. Consult legal counsel to understand the implications of using Veo 3.1 for commercial purposes, particularly concerning potential claims of copyright infringement related to the model’s training data. Establishing clear guidelines for attributing sources where appropriate and ensuring compliance with existing IP laws is vital to avoid legal entanglements.

Preventing Bias Amplification: Generative AI models learn from vast datasets, which often reflect existing societal biases. Veo 3.1, like any AI, can inadvertently perpetuate or even amplify these biases in its outputs, leading to unfair representation, stereotypes, or exclusionary content. Businesses must actively monitor Veo’s generated content for subtle biases in demographics, cultural portrayals, or narrative framing. Implementing diverse human review teams can help identify and mitigate these nuances, ensuring that the generated video content is inclusive, equitable, and representative of your target audience without reinforcing harmful stereotypes. Regular audits of content for bias are a necessary ongoing practice.
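
A recurring bias audit can start from labels that human reviewers assign to a sample of generated clips. The sketch below computes each label’s share of the sample and flags any label above a chosen ceiling. The `max_share` threshold of 0.6 is an arbitrary assumption for illustration, and the labels themselves come from your reviewers, not from the model.

```python
from collections import Counter


def representation_shares(labels):
    """Return each reviewer-assigned label's share of the sample."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def flag_skew(labels, max_share=0.6):
    """List labels whose share exceeds max_share (an assumed ceiling)."""
    shares = representation_shares(labels)
    return sorted(label for label, share in shares.items() if share > max_share)
```

A flagged label is a prompt for discussion by the review team, not an automatic verdict, since the right distribution depends on the campaign and its audience.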

The Indispensable Role of Human Oversight and Collaboration

Despite Veo 3.1’s advanced capabilities, human judgment, creativity, and ethical reasoning remain the bedrock of responsible AI implementation. Veo 3.1 is a powerful assistant, not an autonomous creator. Human operators are essential for defining the creative vision, crafting precise prompts, iteratively refining outputs, and, most importantly, applying the final layers of ethical scrutiny and quality assurance. This symbiotic relationship ensures that the technology serves human intent and values, rather than dictating them. It’s a partnership where AI handles the heavy lifting of generation, while human intelligence provides the strategic direction, moral compass, and creative spark that truly resonates with audiences.

Future-Proofing Your Business in the Age of Generative AI

The pace of innovation in AI is relentless, and the ethical and regulatory landscape is continuously shifting. To remain at the forefront, businesses must adopt a forward-looking approach:

Stay Abreast of Evolving Regulations: Monitor developments in AI ethics guidelines and legislation globally, such as the EU AI Act, the NIST AI Risk Management Framework, and emerging national policies. Proactive adaptation to these evolving standards will prevent costly remediation later and position your business as a responsible industry leader.

Invest in Continuous Learning and Adaptation: Foster a culture of continuous learning within your organization. Encourage teams to stay updated on Veo 3.1’s new features, best practices, and emerging ethical considerations. This includes participating in industry forums, engaging with AI ethics communities, and conducting internal research to understand the implications of new advancements.

Build a Culture of Responsible Innovation: Embed ethical considerations into your company’s DNA. Encourage open dialogue about AI’s impact, celebrate responsible innovation, and empower employees to raise concerns. A proactive, ethical culture is the most robust defense against misuse and the surest path to long-term success with generative AI.

Conclusion

The advent of Veo 3.1 marks a pivotal moment in creative technology, offering unprecedented opportunities for businesses to innovate, scale content creation, and engage audiences in novel ways. However, the true value of this revolution lies not just in its technological prowess but in its responsible and ethical application.

Embracing these guidelines is not merely about compliance; it’s a strategic imperative that fosters innovation, builds lasting trust with stakeholders, and establishes a competitive advantage in a rapidly evolving digital landscape. The future of AI-powered video is bright, and by committing to safety and ethics today, your business can confidently lead the charge, shaping a creative future that is both powerful and profoundly responsible.