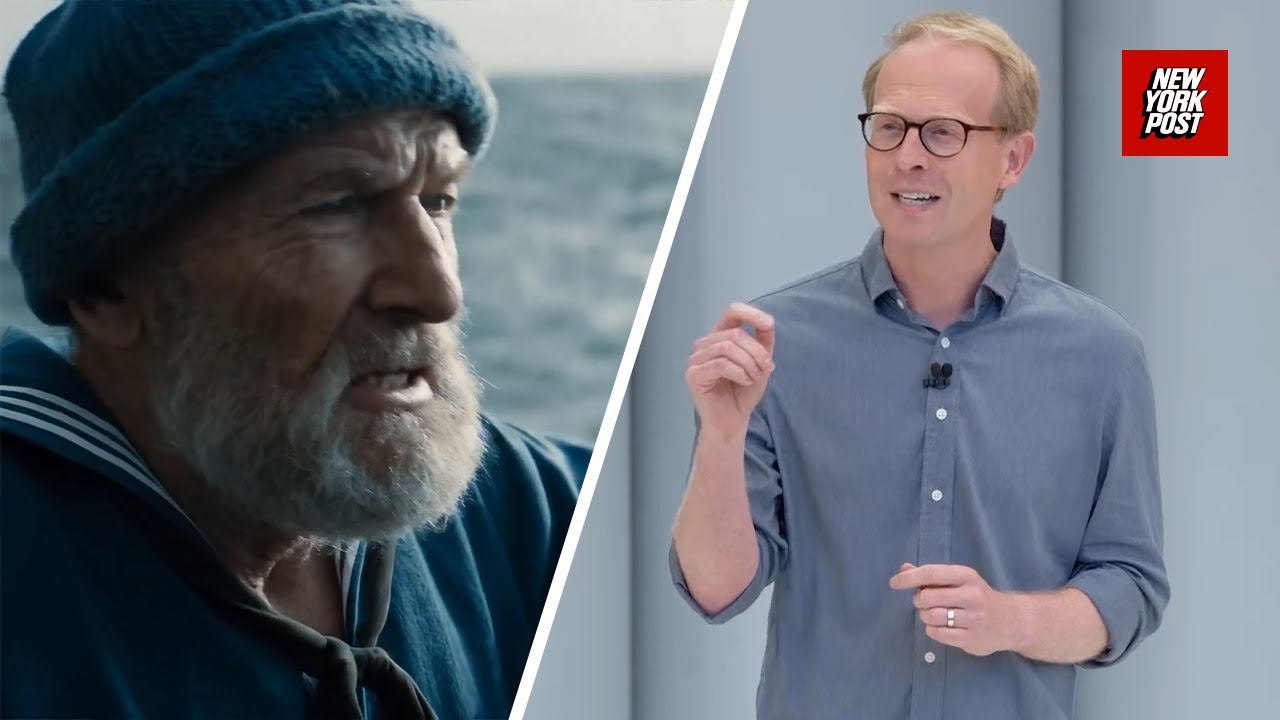

Veo3Generate: The Power of AI Audio: How Veo 3 Creates Realistic Soundscapes

Veo3Generate: The Symphony of Silicon – Weaving Realistic Soundscapes with AI

The human ear, a marvel of engineering, discerns the rustle of leaves, the roar of a waterfall, and the hushed tones of a whispered secret. It’s a symphony of sensation, a constant influx of auditory information that paints the world in vibrant detail. But what if we could recreate that auditory tapestry, not through laborious manual work, but through the sheer power of artificial intelligence? Enter Veo3Generate, a groundbreaking platform poised to revolutionize the way we perceive and interact with sound. This article dives deep into the technology, potential, and impact of Veo3Generate, exploring how it’s crafting breathtakingly realistic soundscapes.

The Alchemic Process: How Veo3Generate Operates

At the heart of Veo3Generate lies a sophisticated AI engine, a digital maestro conducting an orchestra of algorithms. It’s not just about assembling pre-recorded sound effects; it’s about creating them from scratch, weaving intricate sonic textures with remarkable detail.

The core of Veo3Generate relies on generative AI. Trained on vast datasets of audio, including recordings of natural environments, sound effects, and musical instruments, the AI learns the complex relationships between sounds, their characteristics (frequency, amplitude, duration), and their context.

Think of it like this:

- Input: The user provides text prompts, such as “rain falling on a metal roof,” or a description of a specific scene. This is the “seed” for the AI.

- Processing: Veo3Generate’s AI analyzes the prompt, understanding the key elements and desired sonic attributes.

- Generation: The AI draws upon its extensive knowledge base and algorithms to generate the sounds, constructing them layer by layer, mimicking the way sounds naturally interact. This involves:

  - Synthesizing realistic sounds: Using various audio synthesis techniques, the AI creates the fundamental sonic components.

  - Applying spatial audio: Adding depth and directionality, placing sounds in a three-dimensional space for a truly immersive experience.

  - Mixing and mastering: Fine-tuning the audio, ensuring a polished and professional sound.

- Output: A high-quality, unique soundscape, ready to be used in a variety of applications.
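The layer-by-layer idea in the generation step can be made concrete with a toy example. The sketch below is not how Veo3Generate works internally: it swaps the learned model for hand-written signal processing (filtered noise and decaying sine bursts) so that the synthesize, mix, and normalize stages are visible. Every function name, the sample rate, and the gain values are assumptions made for illustration.

```python
import math
import random

SAMPLE_RATE = 8000  # samples per second; kept low so the sketch runs quickly


def white_noise(n, rng):
    """Return n samples of uniform white noise in [-1, 1]."""
    return [rng.uniform(-1.0, 1.0) for _ in range(n)]


def one_pole_lowpass(samples, alpha):
    """Smooth a signal: each output moves a fraction alpha toward the input."""
    out, state = [], 0.0
    for x in samples:
        state += alpha * (x - state)
        out.append(state)
    return out


def rain_layer(n, rng):
    """Broadband hiss: lightly filtered noise stands in for steady rain."""
    return one_pole_lowpass(white_noise(n, rng), alpha=0.6)


def roof_ping_layer(n, rng, pings=6, freq=900.0):
    """Short decaying sine bursts stand in for drops striking metal."""
    out = [0.0] * n
    burst = SAMPLE_RATE // 10  # each ping lasts 0.1 s
    for _ in range(pings):
        start = rng.randrange(0, n - burst)
        for i in range(burst):
            t = i / SAMPLE_RATE
            out[start + i] += math.exp(-40.0 * t) * math.sin(2 * math.pi * freq * t)
    return out


def mix(layers, gains):
    """Sum the layers sample by sample, each scaled by its gain."""
    return [sum(g * layer[i] for layer, g in zip(layers, gains))
            for i in range(len(layers[0]))]


def normalize(samples, peak=0.9):
    """Scale so the loudest sample sits at the requested peak level."""
    loudest = max(abs(s) for s in samples)
    return samples if loudest == 0 else [s * peak / loudest for s in samples]


rng = random.Random(0)  # fixed seed so repeated runs give the same audio
n = SAMPLE_RATE * 2     # two seconds of audio
soundscape = normalize(mix([rain_layer(n, rng), roof_ping_layer(n, rng)], [0.5, 0.8]))
```

The result is a plain list of floats between -0.9 and 0.9. A generative model would replace `rain_layer` and `roof_ping_layer` with learned synthesis, but the mixing and level-setting stages would look much the same.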

Key AI Techniques Employed:

| Technique | Description | Impact |
|---|---|---|
| Generative Models | Create new audio from scratch based on learned patterns. | Original and varied outputs, avoidance of repetitive sound loops. |
| Machine Learning | Enables the AI to improve its sound generation capabilities over time. | Enhanced realism, greater adaptability to complex prompts. |
| Neural Networks | Layered models, loosely inspired by the brain, that learn audio features from data. | Sophisticated sound analysis, realistic sound reproduction. |
| Spatial Audio | Creates a 3D soundscape, adding depth and directionality. | Enhanced immersion, richer auditory experiences. |
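To show what "directionality" means at its simplest, here is a minimal sketch of constant-power stereo panning. It is a two-speaker simplification of the spatial audio row in the table, not a full 3D renderer and not a description of Veo3Generate's own method. The function name `pan_stereo` and the pan range of -1.0 to 1.0 are assumptions chosen for the example.

```python
import math


def pan_stereo(samples, pan):
    """Place a mono signal in the stereo field.

    pan runs from -1.0 (hard left) through 0.0 (center) to 1.0 (hard right).
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    angle = (pan + 1.0) * math.pi / 4.0  # maps pan to the range 0..pi/2
    left_gain, right_gain = math.cos(angle), math.sin(angle)
    return [(s * left_gain, s * right_gain) for s in samples]


# One second of a 440 Hz tone at 8 kHz, placed halfway toward the right speaker.
tone = [math.sin(2 * math.pi * 440 * i / 8000) for i in range(8000)]
stereo = pan_stereo(tone, pan=0.5)
```

Because the two gains are the cosine and sine of the same angle, their squares always sum to one, so the perceived loudness stays roughly constant as a sound moves across the field. True 3D placement adds elevation, distance, and head-related filtering on top of this.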

Unleashing Sonic Potential: Applications Across Industries

The implications of Veo3Generate are far-reaching, impacting industries in previously unimaginable ways.

1. Filmmaking & Game Development:

- Problem: Time-consuming & expensive sound design. Limited access to diverse, quality sound effects.

- Solution: Veo3Generate streamlines sound design, enabling quick iterations and generating unique sounds on demand.

- Impact: Accelerated production timelines, reduced costs, and richer, more immersive experiences.

2. Virtual Reality & Augmented Reality:

- Problem: Creating realistic and interactive auditory experiences in VR/AR.

- Solution: Dynamic soundscapes that respond to user interaction, enhancing immersion.

- Impact: More immersive and engaging VR/AR applications, realistic sound environments.

3. Music Production:

- Problem: Limited sample libraries, artist’s block, and creative stagnation.

- Solution: Experiment with new textures and sounds, discover innovative musical ideas.

- Impact: Open doors to experimental and unique music creation.

4. Education & Training:

- Problem: Simulating real-world environments and scenarios.

- Solution: Create realistic and interactive soundscapes for training simulations.

- Impact: Boost learning immersion and enhance scenario realism.

5. Content Creation:

- Problem: Difficulty sourcing unique audio for podcasts, videos, and other media.

- Solution: Quickly produce diverse soundscapes without licensing stock audio, subject to the copyright questions raised under Ethical Considerations.

- Impact: Easier content creation for all creators, new possibilities for storytelling.

The Future is Audible: The Evolution of Veo3Generate

The future of Veo3Generate is bright. The AI is constantly improving, with advancements in areas such as:

- Enhanced realism: Continued training on massive audio datasets to boost fidelity.

- Interactive soundscapes: Real-time sound generation that responds to user input.

- Customization: Allowing users to tweak sounds and add creative flourishes.

- Integration: Seamless connection with more software and platforms.

Anticipated developments:

| Feature | Expected Impact |
|---|---|
| Real-time sound generation | Interactive soundscapes responding to actions. |
| User-defined sound parameters | Fine-grained control over sound characteristics. |
| Advanced audio mixing tools | Superior audio quality and customization options. |
| AI collaboration features | User collaboration for shared soundscape creation. |

Ethical Considerations

As with all AI technologies, ethical considerations are paramount. Veo3Generate’s developers must be vigilant about:

- Copyright: Ensuring the AI is trained on properly licensed data and produces sounds that respect intellectual property rights.

- Misinformation: The potential for the technology to be used to create misleading or deceptive audio.

- Accessibility: Ensuring the technology is available to creators of all backgrounds.

Conclusion: A Symphony in the Making

Veo3Generate represents more than just a technological advancement; it embodies a paradigm shift in how we interact with sound. It is democratizing sound creation, empowering creators across various fields to weave sonic tapestries that were once impossible. By unlocking the creative potential of AI, Veo3Generate is composing the soundtrack for a future where the possibilities are limited only by our imagination. The symphony of silicon has just begun.


Additional Information

Veo3Generate: Decoding the Power of AI Audio – A Deep Dive

This analysis explores “Veo3Generate: The Power of AI Audio: How Veo 3 Creates Realistic Soundscapes,” providing a detailed breakdown of its capabilities, technology, and impact on audio creation.

I. Introduction & Context:

- What is Veo 3? Veo 3 likely refers to a specific version of a software platform specializing in AI-powered audio generation. It’s a tool designed to create realistic and complex soundscapes for applications such as video games, movies, virtual reality (VR), and potentially music production.

- Key Concept: AI-Driven Sound Generation: The core of Veo 3’s power lies in its use of artificial intelligence, specifically machine learning models, to generate audio. This distinguishes it from traditional sound design, which relies heavily on manual recording, editing, and manipulation of audio samples.

- The Promise of Realism: The primary goal is to create sounds that convincingly mimic the real world. This includes the complexity of environmental sounds (like rain, wind, or crowds) and the nuanced audio events within them.

- Focus on “Soundscapes”: The term “soundscapes” is central, implying the creation of comprehensive and immersive audio environments. This goes beyond individual sound effects and focuses on the overall auditory experience.

II. Technical Deep Dive: How Veo 3 Creates Realistic Soundscapes

This is where we delve into the likely technologies and processes at play:

- Machine Learning Models (Likely Deep Learning):

  - Architecture: Veo 3 probably employs advanced deep learning models, such as:

    - Generative Adversarial Networks (GANs): These models are particularly effective at generating realistic content. A GAN consists of two networks: a generator (creating the sound) and a discriminator (evaluating the realism of the sound). They compete against each other, refining the generator’s output until the discriminator can no longer distinguish between the generated sound and real-world audio.

    - Transformer Networks: Transformers, initially developed for natural language processing, have shown promise in audio generation. They can analyze vast datasets of audio, learn complex relationships between sounds, and generate sequences that sound coherent and realistic.

  - Training Data: The performance of the models is highly dependent on the training data. Veo 3’s success relies on access to a massive and diverse dataset of:

    - High-Quality Audio Samples: Recordings of various environments, objects, and events.

    - Metadata and Annotations: Descriptive labels for the audio, providing context (e.g., “rain in a forest,” “city traffic at rush hour”).

    - Possibly, other modalities: Data from video or text descriptions might also be used to enhance the AI’s ability to generate sounds matching specific scenes.

  - Model Training & Fine-tuning: The developers likely train and continuously refine these models, often using:

    - Supervised Learning: Training the model on labeled audio data to understand relationships.

    - Unsupervised Learning: Letting the model discover patterns within the data without explicit labels.

    - Reinforcement Learning: Using feedback (e.g., from human users or automated evaluation metrics) to guide the model towards generating more realistic and desirable sounds.
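The generator-versus-discriminator contest described above comes down to two loss functions pulling in opposite directions. The sketch below computes them for hand-picked discriminator scores. It illustrates the standard GAN losses in general (using the common non-saturating generator loss), not anything confirmed about Veo 3. The score values are hypothetical and there are no actual networks involved.

```python
import math


def bce(prob, target):
    """Binary cross-entropy for one prediction; prob is clamped away from 0 and 1."""
    p = min(max(prob, 1e-7), 1.0 - 1e-7)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """Low when real clips score near 1 and generated clips score near 0."""
    real_term = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Low when the discriminator scores generated clips near 1 (non-saturating form)."""
    return sum(bce(p, 1.0) for p in d_fake) / len(d_fake)


# Hypothetical discriminator scores, early and late in training.
early = {"real": [0.9, 0.8], "fake": [0.1, 0.2]}
late = {"real": [0.55, 0.5], "fake": [0.5, 0.45]}

for name, scores in (("early", early), ("late", late)):
    d = discriminator_loss(scores["real"], scores["fake"])
    g = generator_loss(scores["fake"])
    print(f"{name}: discriminator={d:.3f} generator={g:.3f}")
```

Early on, the discriminator separates real from generated audio easily, so its loss is low and the generator's is high. As the scores drift toward 0.5, the discriminator is effectively guessing, which is the equilibrium the text refers to.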

- Sound Synthesis Techniques:

  - Generative Modeling: Veo 3’s core technology likely uses generative modeling to synthesize sounds. This involves creating sounds from scratch based on learned patterns, rather than manipulating pre-recorded samples.

  - Parametric Control: Users (sound designers) probably have the ability to control parameters like:

    - Environment type: (e.g., “forest,” “city,” “interior space”)

    - Weather conditions: (e.g., “sunny,” “rainy,” “windy”)

    - Time of day: (e.g., “morning,” “evening”)

    - Specific sound events: (e.g., “birdsong,” “footsteps,” “traffic noise”)

    - Object interactions: (e.g., “door creaking,” “glass shattering”)

  - Audio Effects Integration: The platform likely integrates audio effects like:

    - Reverb: Simulating the acoustics of different spaces.

    - Delay: Creating echoes and other temporal effects.

    - Equalization (EQ): Shaping the frequency content of sounds.

    - Compression: Controlling the dynamic range.
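Two of the effects listed above, delay and compression, are simple enough to write out in full, and an environment parameter can be used to choose their settings. The following is a sketch under stated assumptions: the preset table `ECHO_PRESETS`, its numbers, and the function names are invented for illustration and are not taken from any real product. The compressor is a static one with no attack or release smoothing.

```python
# Hypothetical presets: environment name -> (delay in seconds, feedback amount).
# The numbers are illustrative, not measured from real spaces.
ECHO_PRESETS = {
    "forest": (0.12, 0.2),
    "city": (0.25, 0.35),
    "interior space": (0.05, 0.5),
}


def feedback_delay(samples, delay_samples, feedback):
    """Echo effect: y[n] = x[n] + feedback * y[n - delay_samples]."""
    if delay_samples < 1:
        raise ValueError("delay_samples must be at least 1")
    if not 0.0 <= feedback < 1.0:
        raise ValueError("feedback must be in [0, 1) so the echoes die away")
    out = []
    for n, x in enumerate(samples):
        echoed = out[n - delay_samples] if n >= delay_samples else 0.0
        out.append(x + feedback * echoed)
    return out


def compress(samples, threshold=0.5, ratio=4.0):
    """Static compressor: the portion of each sample above threshold is divided by ratio."""
    out = []
    for x in samples:
        level = abs(x)
        if level > threshold:
            level = threshold + (level - threshold) / ratio
        out.append(level if x >= 0 else -level)
    return out


def apply_environment(samples, environment, sample_rate=8000):
    """Look up the preset for an environment and run delay, then compression."""
    delay_seconds, feedback = ECHO_PRESETS[environment]
    delayed = feedback_delay(samples, round(delay_seconds * sample_rate), feedback)
    return compress(delayed)


click = [1.0] + [0.0] * 7999  # a single impulse, one second long at 8 kHz
processed = apply_environment(click, "city")
```

With the "city" preset, the impulse at sample 0 is compressed from 1.0 to 0.625, and the first echo appears 2000 samples later at 0.35, below the threshold and therefore untouched. Reverb and EQ follow the same pattern of a parameterized function applied to a sample list, though realistic versions need considerably more code.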

- User Interface and Workflow:

  - Input Methods: Veo 3 probably offers ways for users to:

    - Specify desired soundscapes via text prompts, keywords, or descriptions.

    - Use visual interfaces to control environmental parameters.

    - Import and integrate sounds into existing projects (e.g., DAWs or game engines).

  - Real-time Generation: The platform might generate sounds in real-time, allowing for dynamic interactions and immediate feedback.

  - Customization and Editing: Users likely have tools to:

    - Modify the generated audio: Adjusting volumes, panning, and other parameters.

    - Mix and layer sounds: Creating complex soundscapes from multiple generated audio sources.

    - Apply further effects using integrated or external plugins.
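As a rough picture of how a text prompt might be turned into the controllable parameters mentioned earlier, here is a deliberately naive keyword matcher. A production system would more plausibly use a learned text encoder, so treat this as a stand-in for illustration only. The vocabulary lists, the `parse_prompt` function, and the returned dictionary keys are all hypothetical.

```python
import re

# Hypothetical vocabularies, loosely based on the parameter examples in the text.
ENVIRONMENTS = ("forest", "city", "interior")
WEATHER = ("sunny", "rainy", "windy")
TIMES_OF_DAY = ("morning", "evening")
EVENTS = ("birdsong", "footsteps", "traffic")


def parse_prompt(prompt):
    """Map a free-text prompt to scene parameters by simple keyword lookup."""
    words = set(re.findall(r"[a-z]+", prompt.lower()))

    def first_match(options):
        # Return the first known keyword found in the prompt, or None.
        return next((option for option in options if option in words), None)

    return {
        "environment": first_match(ENVIRONMENTS),
        "weather": first_match(WEATHER),
        "time_of_day": first_match(TIMES_OF_DAY),
        "events": [event for event in EVENTS if event in words],
    }


scene = parse_prompt("Rainy evening in the city, distant traffic and footsteps")
```

Here `scene` holds `"city"`, `"rainy"`, and `"evening"` for the three single-valued fields and `["footsteps", "traffic"]` for the events. Words outside the vocabularies, such as "distant", are silently ignored, which is exactly the kind of nuance a learned model would be expected to capture.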

III. Benefits and Advantages:

- Efficiency & Time Savings: AI-powered sound generation can dramatically reduce the time required to create complex soundscapes. Manual sound design can be extremely time-consuming.

- Scalability: Easily create diverse and adaptable soundscapes for large projects or evolving environments.

- Cost-Effectiveness: Reduce the need for expensive recording sessions, sound libraries, and manual editing.

- Creativity and Exploration: Enable sound designers to experiment with new sonic possibilities and create unique audio experiences.

- Immersive Experiences: Contribute to more realistic and engaging environments in VR, games, and movies.

- Accessibility: Potentially make sound design more accessible to creators with limited resources or technical expertise.

IV. Challenges and Limitations:

- Realism Fidelity: Achieving perfect realism is a significant challenge. AI-generated sounds can sometimes lack the subtle nuances and imperfections that characterize real-world audio.

- Control & Predictability: Fully controlling the precise output of the AI can be difficult. Developers might need to find a good balance between automation and control.

- Over-reliance & Artistic Impact: Over-reliance on AI could potentially diminish the artistic skills of sound designers.

- Training Data Bias: The quality and diversity of training data are critical. Bias in the data can lead to skewed or stereotypical audio generation.

- Computational Requirements: Training and running complex AI models require significant computing power, which can be a barrier to entry.

- Legal & Ethical Considerations: Issues around intellectual property and copyright of the training data are important.

V. Applications and Use Cases:

- Video Games: Creating immersive environments for games of all genres.

- Movies and TV: Sound design for film, TV, and animation.

- Virtual Reality (VR) and Augmented Reality (AR): Generating realistic spatial audio for immersive experiences.

- Music Production: Generating unique soundscapes, textures, and sound effects for music.

- Interactive Media: Creating dynamic and adaptive audio for interactive applications.

- Advertising and Marketing: Developing engaging audio for commercials and promotional materials.

- Audio for Training and Education: Simulating diverse sound environments for educational purposes.

- Accessibility: Developing audio tools for visually impaired people.

VI. Future Trends and Developments:

- Increased Realism: Continuous improvements in AI models and training data will drive ever-more-realistic sound generation.

- Enhanced User Control: More intuitive and powerful interfaces for fine-tuning and manipulating generated audio.

- Real-time Interaction and Adaptation: AI systems that can dynamically respond to user input and changes in the environment.

- Integration with Other AI Tools: Combining audio generation with other AI technologies, such as video synthesis and natural language processing.

- Personalized Soundscapes: AI-driven systems that can create customized audio experiences tailored to individual users’ preferences.

- More Specialized Models: AI models trained for specific sonic domains (e.g., weapons sounds, orchestral scores).

- Collaboration and Community: Platforms that foster collaboration and enable sharing of custom soundscapes and models.

VII. Conclusion:

Veo3Generate and similar AI-powered audio tools represent a significant evolution in sound design. The potential for creating realistic and immersive soundscapes is vast. While challenges remain, the benefits of increased efficiency, creative freedom, and accessibility are substantial. As AI technology continues to advance, we can expect even more sophisticated and powerful audio generation tools to emerge, transforming the landscape of audio production and the way we experience sound in the digital world. Understanding the underlying technologies and limitations is critical for sound designers and other creatives who want to harness the power of AI for their projects.